Contemporary Production: The World Of MPE Controllers


These days, it’s easier than ever to completely control the expression of your MIDI data with a wide variety of often quirky, somewhat futuristic creative instruments. Erin Barra delves deep into the world of MPE controllers…

In my never-ending quest to access and explore expressive technology, I want to focus on MPE data. I was first introduced to MPE in late 2015 when I started messing around with a ROLI Seaboard and then, a few months later, found myself creating tutorial video content for that same company.

Shortly thereafter, I had the pleasure of befriending Roger Linn, who created the MPE-driven LinnStrument, as well as many other pivotal pieces of tech we all know and love, and had several lengthy conversations with him about MPE and music. Lately, I’ve been seeing even more MPE-driven technology, such as the Instrument 1 by Artiphon, as well as other hardware and software developers becoming MPE compatible. There’s obviously a trend happening here and it’s one I’m simultaneously fascinated and frustrated by.

As an educator, one of the things that I find frustrating is the learning curve in understanding and controlling MPE-driven tech, so the first question I’d like to address here is: ‘What is MPE?’ MPE is often expanded as Multidimensional Polyphonic Expression, although the MIDI Manufacturers Association (MMA) calls it MIDI Polyphonic Expression. One of the obstacles in understanding MPE is that, in order to grasp it, you also have to grasp the basics of how the MIDI protocol itself works.

If you’re like me, you never really bothered to learn how MIDI data gets sent from your controller to your hardware and software instruments, because like a mobile phone, it just works. But then the day comes when you find yourself needing to send or receive MIDI data on different channels and you spend the next three hours combing the internet for answers…

To very simply explain MIDI data, it’s the language that allows your control surfaces, such as a MIDI keyboard or grid controller, to communicate with your virtual and hardware instruments. You play a note on your controller and it sends MIDI data to the instrument, telling it things like which note to play and for how long.

These messages follow a very specific and restricted format, which was developed in the early 80s and hasn’t been changed since. Almost all modulation (polyphonic aftertouch being the exception) is global to a MIDI channel, meaning that a parameter change such as pitch bend applies to every note being played on that channel at any given time.
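To make the idea of a ‘global’ message concrete, here is a minimal Python sketch of the raw bytes behind a few MIDI 1.0 messages. The helper names (`note_on`, `note_off`, `pitch_bend`) are made up for illustration and don’t belong to any particular library. The thing to notice is that a pitch-bend message carries a channel but no note number, which is why it moves every note sounding on that channel.

```python
def note_on(channel, note, velocity):
    """Build a 3-byte Note On message. channel is 0-15, note and velocity 0-127."""
    return bytes([0x90 | channel, note & 0x7F, velocity & 0x7F])


def note_off(channel, note, velocity=0):
    """Build a 3-byte Note Off message; velocity here is the release velocity."""
    return bytes([0x80 | channel, note & 0x7F, velocity & 0x7F])


def pitch_bend(channel, value):
    """Build a Pitch Bend message from a 14-bit value (0-16383, 8192 = centre).

    There is no note number in this message: it addresses a whole channel.
    """
    return bytes([0xE0 | channel, value & 0x7F, (value >> 7) & 0x7F])


# A C major chord on the first channel (index 0), followed by one bend.
chord = [note_on(0, n, 100) for n in (60, 64, 67)]
bend = pitch_bend(0, 8192 + 2048)  # a single message that bends all three notes

for msg in chord + [bend]:
    print(msg.hex(" "))
```

How far that bend value actually moves the pitch depends on the receiving instrument’s bend-range setting, which this sketch doesn’t cover.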

Imagine yourself holding down a chord and then adjusting one of the mod wheels on the left side of your keyboard controller. All notes in the chord would be modulated the same amount in the same direction. MPE, on the other hand, while still having a very specific way of working, is far less restricted and instead of sending only global modulation messages, it sends all this modulation information on a per-note basis.

So that same chord could have just the top note bending up to a higher interval while the others stay put, or perhaps the bottom note’s filter cutoff opens up and brightens while the others are closing. MPE is still sending MIDI messages, but it gives each sounding note its own MIDI channel, so channel-wide messages such as pitch bend end up affecting only that note.
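One way to picture how MPE gets per-note control out of ordinary MIDI messages is that each sounding note is given a channel of its own. The sketch below is a simplified illustration under stated assumptions, not how any specific controller is implemented: the class name `MpeVoiceAllocator` is hypothetical, it assumes a single MPE zone whose member channels are indices 1 to 15 (leaving the first channel for zone-wide messages), and it hands channels out in simple first-free order.

```python
class MpeVoiceAllocator:
    """Toy allocator: one member channel per sounding note (hypothetical helper)."""

    def __init__(self, member_channels=range(1, 16)):
        self.free = list(member_channels)  # channels not currently in use
        self.active = {}                   # note number -> channel it sounds on

    def note_on(self, note, velocity):
        # Raises IndexError if more notes are held than there are channels;
        # a real device would need a voice-stealing or sharing strategy.
        channel = self.free.pop(0)
        self.active[note] = channel
        return bytes([0x90 | channel, note, velocity])

    def bend(self, note, value):
        # Pitch bend is still a channel message, but the channel now holds
        # exactly one note, so only that note moves.
        channel = self.active[note]
        return bytes([0xE0 | channel, value & 0x7F, (value >> 7) & 0x7F])

    def note_off(self, note):
        channel = self.active.pop(note)
        self.free.append(channel)
        return bytes([0x80 | channel, note, 0])


alloc = MpeVoiceAllocator()
chord = [alloc.note_on(n, 100) for n in (60, 64, 67)]
top_note_bend = alloc.bend(67, 8192 + 2048)  # only the top note bends

for msg in chord + [top_note_bend]:
    print(msg.hex(" "))
```

The same idea extends to other per-note dimensions, such as pressure or a filter-style controller, by sending those messages on the note’s own channel as well.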

These per-note modulations are tied to some sort of gesture, such as a left-to-right, up-and-down or back-and-forth movement, and can also take into account how velocity and release velocity affect the sound. There’s a deep physical connection between how the user moves and the resulting sounds. To create a metaphor, the MIDI protocol is a primitive culture with limited language skills.

MPE, on the other hand, is a very sophisticated culture with a language capable of expressing detailed and elaborate thoughts. The rub here is that MPE is living in a MIDI world, so it has to find workarounds and in some cases, dumb itself down in order to communicate with others. For instance, if you send MPE messages to a synth or DAW that won’t receive them, it either glitches or just won’t acknowledge those messages.

In response, users have to restrict themselves to playing one note at a time, create several tracks, each with an instance of the desired VI dedicated to the messages associated with a single note, or turn off the MPE messaging altogether, reducing the device to a traditional MIDI controller. As more and more MPE-compatible tech comes out, these issues are dissipating, but the evolution is a slow one.
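The multi-track workaround amounts to sorting the incoming stream by channel and feeding each channel to its own instrument instance. Here is a rough Python sketch of that sorting step, assuming the messages arrive as raw byte strings; `split_by_channel` is a hypothetical helper, and the actual routing is done differently in every DAW.

```python
from collections import defaultdict


def split_by_channel(messages):
    """Group channel messages by MIDI channel (0-15), one list per 'track'."""
    tracks = defaultdict(list)
    for msg in messages:
        status = msg[0]
        # Only status bytes 0x80-0xEF carry a channel in their low four bits;
        # system messages (0xF0 and above) are skipped in this sketch.
        if 0x80 <= status <= 0xEF:
            tracks[status & 0x0F].append(msg)
    return dict(tracks)


stream = [
    bytes([0x91, 60, 100]),     # note on, channel index 1
    bytes([0x92, 64, 100]),     # note on, channel index 2
    bytes([0xE2, 0x00, 0x50]),  # pitch bend, channel index 2 only
    bytes([0xF8]),              # timing clock, has no channel
]

for channel, msgs in sorted(split_by_channel(stream).items()):
    print(channel, [m.hex(" ") for m in msgs])
```

Each resulting list would then drive one instance of the instrument, which is exactly why this approach gets heavy once you play more than a few notes at a time.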


Is this for you? MPE is expressive, and when it comes down to it, isn’t that what making music is all about? This technology is far from perfect and there’s a lot more development that lies ahead, but for those of you who are all about the instrumental ‘feel’, literally and figuratively, MPE is for you.

Express yourself

The next question I’d like to address is: ‘Why would I want to use MPE?’, which also raises the question: ‘Who uses MPE?’ I see a lot of people using new and exciting tech just for the sake of using it, as opposed to doing so for a musical reason, so this question is an important one. MPE isn’t for everyone, but it is for people who want to be as expressive as possible when playing electronic instruments.

Those people are typically instrumentalists, whether that be in a traditional sense or otherwise, composers, producers, sound designers, or any combination thereof. Since there’s a gestural element to how MPE works, there’s a deep connection between the player, the instrument and the sound source.

In a way, you’re literally putting your hands on a sound, and it’s reacting to you. For such forward-thinking technology, the correlations between MPE instruments and traditional instruments like a violin or guitar are uncanny. It’s almost as if we’re coming full circle, back to an earlier time when instrumental proficiency was king.

If you’re an instrumentalist who’s into digital technologies, you’re going to have a blast integrating MPE into your toolbox, both onstage and in the studio. If you score films, you’re going to be so changed by the effect MPE will have on your work that going back to a regular MIDI controller will feel wrong. If you’re a sound designer, the possibilities MPE unlocks will elevate what you’re capable of.

When it comes to MPE, the right user paired with the right controller can result in beautiful music. That being said, since there’s such a performative aspect to MPE, controlling the tech does require a certain amount of technical and musical acumen, so it’s not for everyone. Once you wrap your mind around MPE and how it works, you might find yourself asking questions like: “How do I edit MPE performances?”, or: “How do I get my favourite VSTs to communicate with my MPE controller?”

Admittedly, there are a lot of ‘how’ questions which come up when people start falling down the MPE rabbit hole and the answer to almost all of them is: “It’s complicated.” If all you want to do is plug and play, then you’ll find very little resistance on the path between you and your expressive ideas. On the other hand, if you want to start customising your experience, you’ll run into some technical hurdles you’ll have to clear.

Most MPE controllers come with a dedicated sound engine or a list of suggested engines which they will easily communicate with. So what do you do if you want to use something like NI’s Massive or the ever-popular Serum, neither of which currently supports MPE, in conjunction with your MPE controller? The answer is that it depends on which DAW and VST you’re using. Most developers and manufacturers will have information on their respective sites which either walks you through the process or deters you from attempting it in the first place. Either way, I’ve found it to be clunky and a lot of work.

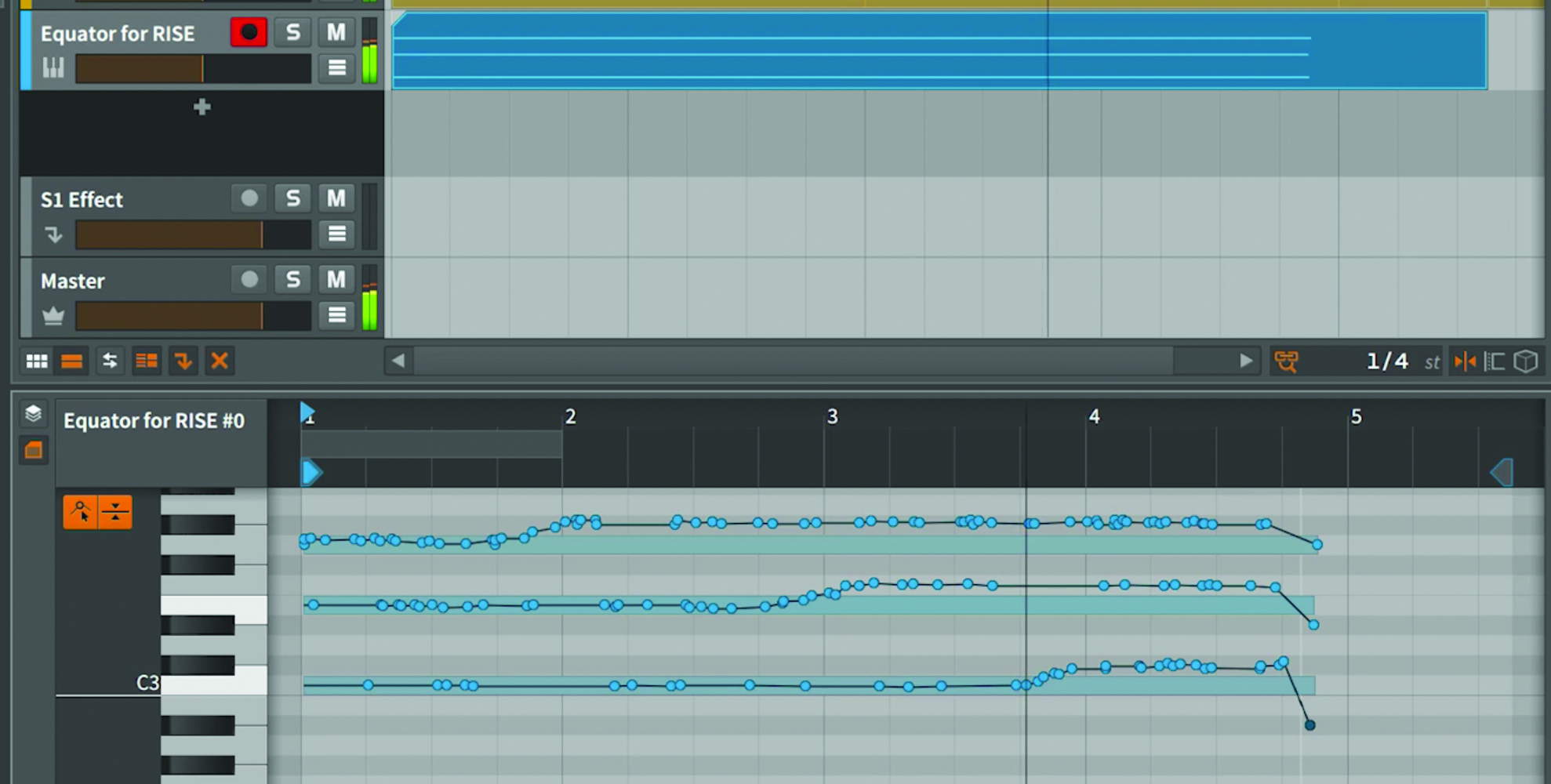

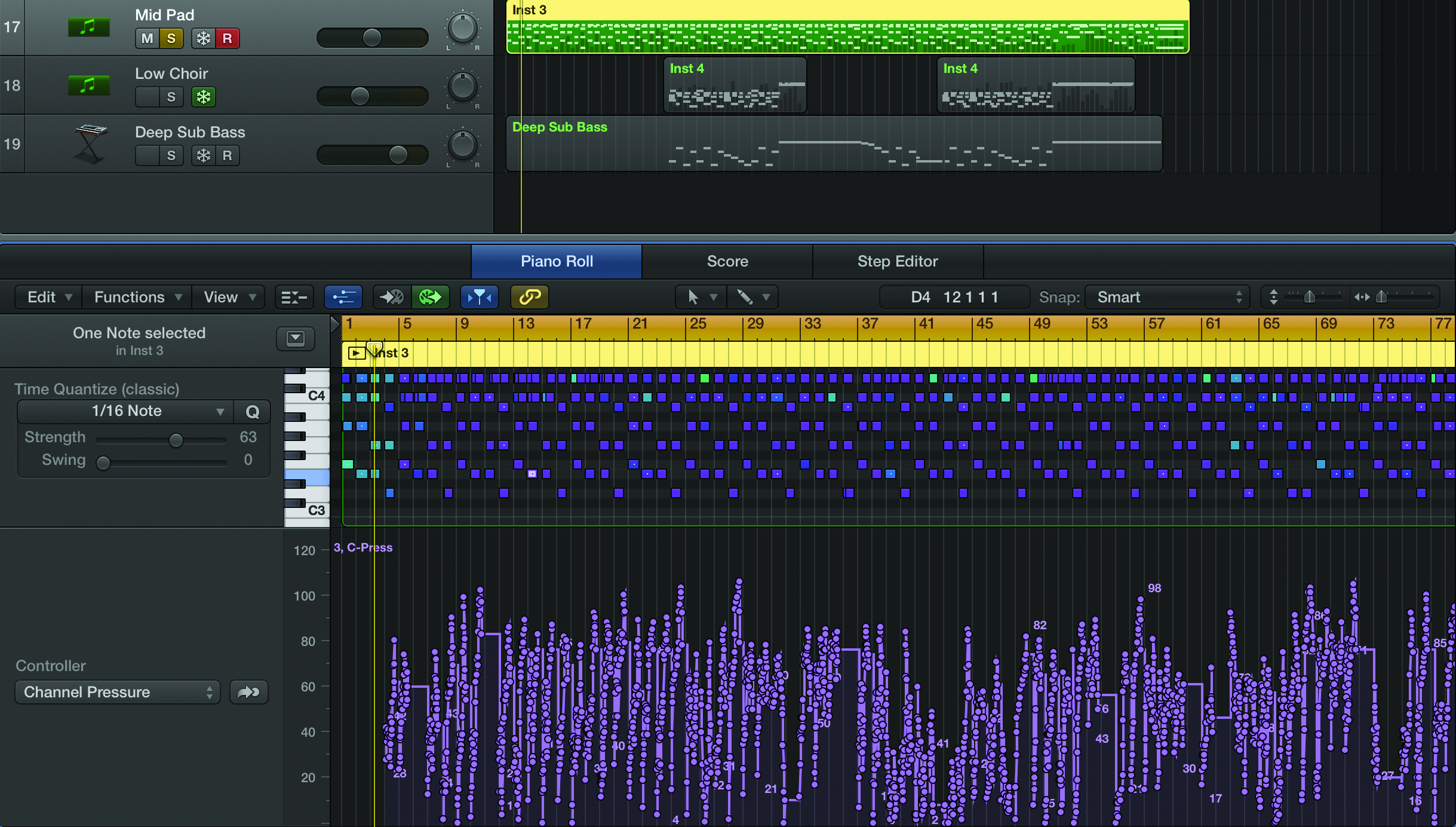

Perhaps the bigger and more important conundrum relates to how one would edit per-note modulation once you’ve got it sequenced into a DAW, regardless of the synth engine you’re using. Being able to edit a performance is partly why MIDI is so powerful, and is a functionality we’re all largely dependent on.

Now, imagine how someone might represent pitch bend on a note-by-note basis inside of a MIDI editor window. It’s another one of the big issues MPE faces by existing in a MIDI-driven world and only further delineates MPE from MIDI. Although they are related, they truly are not the same.

Editing MPE data is a head-scratcher for most DAWs and, from what I can tell, very few have attempted to answer that question in any tangible way so far. The workflows for editing MPE data that I have encountered in Bitwig and Logic are challenging to use, but you have to respect the developers for even attempting to give their users access.

My conclusion is that this data wasn’t meant to be edited, it was meant to be performed. I’m really interested to see what future developments unfold with editing MPE data, because I think that would open up the floodgates.

MPE in the spotlight

The last questions left to answer are: “When and where would I use MPE?” I think the answer is probably different for everyone, but speaking from my own experience, I can say that I use my MPE devices all the time. For me, the biggest impact is on stage.

MPE has such a unique sound and energy to it, and people respond to that. From an audience perspective, it’s obviously a digital tool, but you can create such unexpected textures and expressive motifs that people are fascinated. Not to mention, the majority of these controllers look like nothing anyone’s ever seen before, so visually, it’s very interesting. With a lot of tech, there’s a huge knowledge gap between what a performer is doing and how the audience relates that to the music they’re hearing, but with MPE devices, the opposite is true.

Secondly, I’m using it to compose and produce. For me, both composing and producing are about properly conveying an emotion, whether that comes in the form of properly setting another person’s vision or choosing the right tools to tell my own story. To go back to the previous metaphor, working with MIDI feels like I’m restricted to using four-letter words to describe something, whereas MPE gives me a whole range of adjectives…
