The Essential Guide to Vocal Effects

The human voice is the most popular sound in music. Over the years we’ve tried to tune it, abuse it, affect it and vocode it, and we’re still doing it to this day. In response, here’s our essential guide to vocal effects (and how to get that robot voice)…

The history of the vocal effect arguably starts with the human voice reverberating within a cave… so it’s quite a long one! For practical reasons, then, we’ll ignore the first tens of thousands of years and fast-forward to the 20th century. In the 1930s the Talk Box was developed by Alvino Rey (said to be the grandfather of the Butlers in Arcade Fire), who employed the device in a novelty ‘talking guitar’ act with his wife Luise King.

The Talk Box has taken various forms over the years but is, in essence, a plastic tube that carries an amplified sound to the performer’s mouth. Once there, that sound can be physically ‘shaped’ by the mouth to create anything from a wah-wah effect to a vocoder-like sound.

Check out Stevie Wonder demoing one in this clip: https://www.youtube.com/watch?v=PnR19INlXV8. He uses it much as a vocoder is used today, playing his keyboard to set the pitch but using his mouth to create the effect.

Box to vocoder

The Talk Box sound has been used in a lot of music over the years, most notably by Todd Rundgren, Peter Frampton, Guns N’ Roses and Bon Jovi, and even contributed to the voice of BB-8 in Star Wars: The Force Awakens.

Its electronic cousin, the vocoder, was also developed in the 1930s, but this time for scientific rather than novelty purposes: it was first used to transmit speech over lower bandwidths. It took Bob Moog and Wendy Carlos to see the technology’s more musical possibilities, and they developed a vocoder that was used in the score to A Clockwork Orange in 1971.

The vocoder effect was arguably first showcased properly by Kraftwerk on their 1974 opus Autobahn. This chugging surprise hit brought the sound to a larger audience. From there it was popularised even further by the likes of ELO, Pink Floyd and Alan Parsons during the rest of the 70s.

We’d argue that Laurie Anderson’s 1981 hit O Superman was the first track to demonstrate its full, powerful beauty as both an effect and an instrument.

Anderson’s ethereal, off-world alien vocals were the polar opposite of Kraftwerk’s regimented robot vocoder but, either way, the vocoder was suddenly big business. Many companies were now involved in creating hardware for vocal effects, including EMS, Eventide, Roland and Boss.

The vocoder effect has since been used by everyone from Afrika Bambaataa to Radiohead, Daft Punk to Coldplay, and its popularity has meant that there is now plenty of current hardware and software that is capable of creating that sound.

More recent hardware vocoders have included the Korg MicroKorg S synth, Roland JD-Xi synth, Boss VO-1 effects pedal, Roland VP-03, TC Helicon VoiceTone Harmony-G XT, Novation MiniNova synth and Boss VE-8 effects processor.

Do you believe?

Vocal effects have been used to turn the human voice into a non-human voice, create a human voice from a computer (Yamaha’s Vocaloid), retune a vocal, or use extreme tuning as an effect. Now, of course, we’re talking Auto-Tune, which was originally developed by Antares over 20 years ago as a pitch correction plug-in.

Thanks to Cher’s 1998 hit Believe, Auto-Tune is often thought of as ‘the Cher effect’. The producers of that song, and a slew of others since, used its pitch correction features more as an effect, not unlike a vocoder, forcing the pitch to jump from note to note by employing its extreme ‘zero setting’, which Antares thought no one would ever use.
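To picture what that hard note-to-note jump means, here’s a minimal Python sketch of just the snapping step: take a detected pitch and pull it straight to the nearest note with no glide. It assumes equal temperament with A4 at 440Hz, and the function name is our own invention. This is not Antares’ algorithm, only the arithmetic behind the idea.

```python
import math

A4_HZ = 440.0  # assumed tuning reference, not taken from any plug-in


def snap_to_semitone(freq_hz: float) -> float:
    """Return the equal-tempered pitch nearest to freq_hz (hypothetical helper)."""
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    # Distance from A4 in semitones, rounded to the nearest whole semitone.
    semitones = round(12 * math.log2(freq_hz / A4_HZ))
    # Convert the whole-semitone distance back into a frequency.
    return A4_HZ * 2 ** (semitones / 12)
```

Feed it a slightly flat 431Hz and you get 440Hz back; a real pitch corrector would normally glide towards that target over a short time rather than jumping there instantly.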

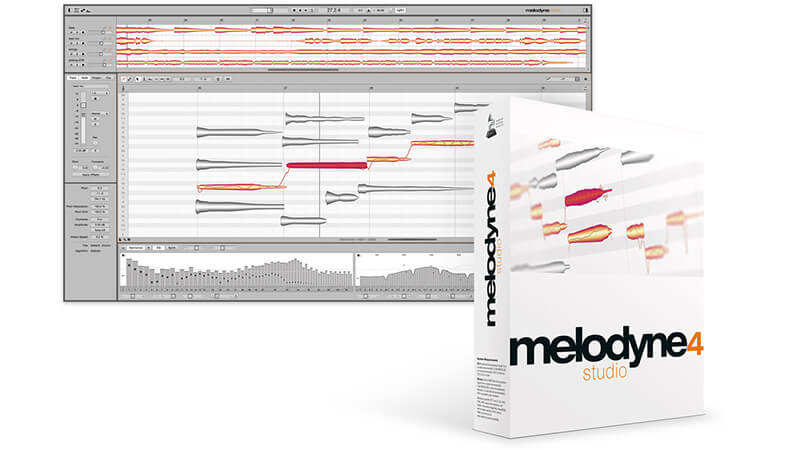

Celemony’s Melodyne is a great tuning processor for vocals (and any other audio) and has also been used as a creative tuner over the years. It also lets you dive right into a mix and sort out tuning (or other) issues in real time.

The merits of using Auto-Tune or software like it have been argued one way and then the other by just about everyone in the music business over the last two decades, with many claiming that it’s made vocalists and producers lazy.

As a technology magazine we’ll always go with the tech and leave it up to the humans to use it ‘correctly’ or ‘incorrectly’ – it’s just one of many tools for vocals that we can all now enjoy and, like most of them, its creative use is limited only by the creativity of its user.

It’s all gone soft

Harmony effects were also big business back in the day, with the likes of TC Helicon and Eventide supplying hardware used not only by vocalists to fatten their own voices with automatic harmonies, but also by guitarists and anyone else after a quick and easy musical fix.

Nowadays the same companies and more produce harmonizing plug-ins, and software has caught up with hardware in pretty much all aspects of vocal processing. Most vocal effects can be created by cost-effective plug-ins like iZotope’s VocalSynth, Melda’s MHarmonizerMB, FabFilter Pro-DS, XILS-lab XILS Vocoder 5000 and Antares AVOX 4.

Thankfully there is now a whole new generation of users enjoying these effects and being incredibly creative with them and, like the best effects, vocal plug-ins have jumped the gap from being practical, hands-on tools to being creative instruments in their own right.

6 Vocal Effect Tips and Tricks

1. EQ basics

With vocals in fairly complex mixes, it’s a good idea to roll off the bass end to avoid clashes with other elements in the mix (this is less important in a sparse, vocal-only mix). Roll off below 100-150Hz first. You can always boost the vocals a little in the 3-6kHz range later if need be.
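If you’re curious what that low-end roll-off does to the audio itself, here’s a rough Python sketch of a first-order high-pass filter. It is far gentler (6dB per octave) than the filters in most EQ plug-ins, and the function names, the 120Hz default and the list-of-floats audio format are all our own assumptions for illustration.

```python
import math


def high_pass(samples, cutoff_hz=120.0, sample_rate=44100):
    """First-order high-pass filter: attenuates content below cutoff_hz."""
    rc = 1.0 / (2 * math.pi * cutoff_hz)  # time constant of the equivalent RC circuit
    dt = 1.0 / sample_rate
    alpha = rc / (rc + dt)
    out = []
    prev_in = prev_out = 0.0
    for x in samples:
        # Pass on changes in the input, let steady (low-frequency) content decay.
        prev_out = alpha * (prev_out + x - prev_in)
        prev_in = x
        out.append(prev_out)
    return out


def sine(freq_hz, seconds=0.5, sample_rate=44100):
    """Test tone at full scale (hypothetical helper)."""
    count = int(seconds * sample_rate)
    return [math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(count)]


def peak(samples):
    """Peak level of the second half, skipping the filter's settling time."""
    return max(abs(s) for s in samples[len(samples) // 2:])
```

Run a 40Hz rumble and a 3kHz tone through `high_pass` and compare their `peak` values: the rumble comes out at roughly a third of its original level while the 3kHz tone is left almost untouched.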

2. Compression basics

With a compressor, you set a threshold level; whenever your vocal goes over it, the volume is automatically reduced by a ratio you choose, at a speed (Attack) you set. Mastering your compressor settings will therefore give you smoother vocals. Start with low (fast) Attack and Release times and a ratio between 5:1 and 10:1.
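The threshold-and-ratio relationship is easier to see with numbers. The Python sketch below works out what a simple hard-knee compressor does to a given input level; the -18dB threshold is an arbitrary value of ours, the function names are hypothetical, and Attack and Release are deliberately left out so that only the level maths remains.

```python
def compressed_level_db(level_db, threshold_db=-18.0, ratio=5.0):
    """Output level in dB for a hard-knee compressor (illustrative only)."""
    if ratio < 1:
        raise ValueError("ratio must be at least 1")
    if level_db <= threshold_db:
        return level_db  # below the threshold the signal is left alone
    # Above the threshold, every `ratio` dB of input yields 1dB of output.
    return threshold_db + (level_db - threshold_db) / ratio


def gain_reduction_db(level_db, threshold_db=-18.0, ratio=5.0):
    """How many dB the compressor takes off at this input level."""
    return level_db - compressed_level_db(level_db, threshold_db, ratio)
```

With these assumed settings, a vocal peak at -8dB sits 10dB over the threshold; at 5:1 it comes out only 2dB over, at -16dB, which is 8dB of gain reduction. At 10:1 the same peak comes out at -17dB.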

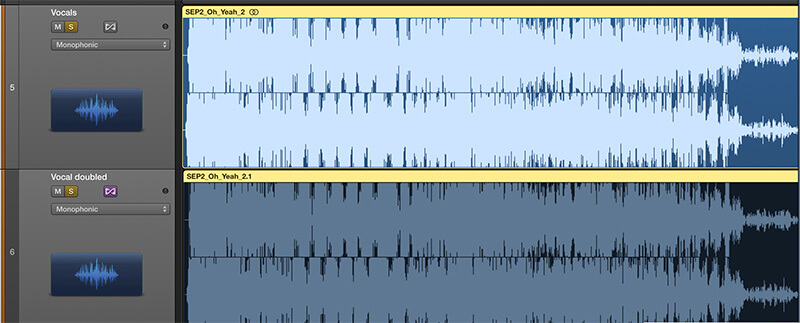

3. Double up, quick time

Need some extra girth in your vocal? Simply copy one take and double it up to artificially double-track it. You can get away with this on other instruments in a mix, but with vocals you can save yourself a serious amount of recording time by doing this and then processing the second track with a touch of delay (25ms) or fine-tuning, maybe even to get some harmony going.
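In a DAW this is a copy, paste and nudge job, but the underlying sum is simple enough to sketch in Python. The function name, the list-of-floats audio format and the 0.7 level for the copy are our own assumptions rather than recommended settings.

```python
def double_track(samples, delay_ms=25.0, sample_rate=44100, double_gain=0.7):
    """Mix a take with a delayed, quieter copy of itself (illustrative only)."""
    delay = round(delay_ms * sample_rate / 1000)  # delay expressed in samples
    out = []
    # The output runs `delay` samples longer so the copy's tail is not cut off.
    for i in range(len(samples) + delay):
        dry = samples[i] if i < len(samples) else 0.0
        doubled = samples[i - delay] if i >= delay else 0.0
        out.append(dry + double_gain * doubled)
    return out
```

A plain delayed copy like this is only the starting point; a slight retune of the copy, as the tip suggests, is what stops the result sounding like a simple echo.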

4. Delay basics

The rule with using delays on vocals – on anything, in fact – is not to go too mad. But if you add a delay of around 120ms with a touch of feedback you can instantly add a lot more impact to any vocal. The amount you choose will depend on the tempo of your track but start here and experiment.
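Because the right delay time depends on tempo, it helps to know the note lengths in milliseconds. The Python sketch below converts a BPM into a few common delay times and then applies a basic feedback delay; the function names and the default feedback and mix amounts are our own assumptions, not settings from any particular plug-in.

```python
def delay_times_ms(bpm):
    """Common tempo-synced delay times in milliseconds for a given BPM."""
    quarter = 60000.0 / bpm  # one beat (quarter note) in milliseconds
    return {
        "1/4": quarter,
        "1/8 dotted": quarter * 0.75,
        "1/8": quarter / 2,
        "1/16": quarter / 4,
    }


def feedback_delay(samples, delay_ms=120.0, feedback=0.3, mix=0.3,
                   sample_rate=44100, tail_seconds=1.0):
    """Basic feedback delay on a list of float samples (illustrative only)."""
    delay = max(1, round(delay_ms * sample_rate / 1000))
    total = len(samples) + int(tail_seconds * sample_rate)
    buffer = [0.0] * delay  # circular buffer holding the last `delay` samples
    out = []
    for i in range(total):
        dry = samples[i] if i < len(samples) else 0.0
        echoed = buffer[i % delay]  # what went in `delay` samples ago
        # Feed a portion of the echo back in so the repeats fade out gradually.
        buffer[i % delay] = dry + feedback * echoed
        out.append(dry + mix * echoed)
    return out
```

At 125bpm a sixteenth note works out at exactly 120ms, so the suggested starting point lands right on the grid at that tempo; at other tempos, `delay_times_ms` gives you the equivalent values to try.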

5. Vocode!

You might well be sitting on a pretty damn good vocoder already. Many DAWs come bundled with lots of great effects, some with filters and modulators that can produce that vocoder effect. Logic, for example, has the EVOC 20, with robots galore.
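For the curious, the filters-and-modulators idea behind a classic channel vocoder can be sketched in a few lines of Python. This is a bare-bones illustration under our own assumptions (five bands, a fixed Q, a 10ms envelope smoother, audio as lists of floats) and not how the EVOC 20 or any other product is implemented. The voice is split into bands, the loudness of each band is tracked, and that loudness then controls the same band of a carrier sound such as a synth.

```python
import math


def bandpass(samples, center_hz, q, sample_rate):
    """Biquad band-pass filter with unity gain at center_hz."""
    w0 = 2 * math.pi * center_hz / sample_rate
    alpha = math.sin(w0) / (2 * q)
    a0 = 1 + alpha
    b0, b2 = alpha / a0, -alpha / a0
    a1, a2 = -2 * math.cos(w0) / a0, (1 - alpha) / a0
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        y = b0 * x + b2 * x2 - a1 * y1 - a2 * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out


def envelope(samples, sample_rate, smooth_ms=10.0):
    """Rectify and smooth a signal so the result follows its loudness."""
    coeff = math.exp(-1.0 / (smooth_ms * 0.001 * sample_rate))
    level = 0.0
    out = []
    for x in samples:
        level = coeff * level + (1 - coeff) * abs(x)
        out.append(level)
    return out


def vocode(voice, carrier, sample_rate=16000,
           bands=(300, 600, 1200, 2400, 4800), q=4.0):
    """Impose the voice's per-band loudness on the carrier (illustrative only)."""
    length = min(len(voice), len(carrier))
    out = [0.0] * length
    for center in bands:  # every band centre must sit below sample_rate / 2
        voice_band = bandpass(voice[:length], center, q, sample_rate)
        voice_env = envelope(voice_band, sample_rate)
        carrier_band = bandpass(carrier[:length], center, q, sample_rate)
        for i in range(length):
            out[i] += carrier_band[i] * voice_env[i]
    return out
```

Real vocoders use many more bands and far more careful filtering, but the principle is the same: when the voice is silent nothing comes out, and when it speaks the carrier takes on its shape.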

6. Tune!

It is, of course, possible to tune your recorded vocals note by note with any audio editor. But, frankly, life is too short, and dedicated editors like Celemony’s Melodyne make it a lot easier. Fortunately DAWs like Tracktion ship with a version of the software and, as with Auto-Tune, you might wonder how you ever worked without it.