Tracing the origins of synthesis

Now a staple element of any music producer’s arsenal, the venerable synthesizer was born out of an eclectic assortment of innovations.

It isn’t easy to identify the first-ever synthesizer. Some would point to the theremin, invented around 1920 and patented in the US in 1928, and the Ondes Martenot, introduced in 1928, both inspired by and based on the radio technology of the day. The two instruments were very different: where the Ondes Martenot was intentionally designed to sound cello-like, the theremin, not least thanks to the unique way it is played, produced a sound that was all its own.

However, neither instrument allowed significant modification of the sound it produced, and some would say that this lack of programmability means they are just that: instruments in their own right and not synthesizers at all. But by this measure, one would have to start considering whether pipe and steam organs are in fact synthesizers, albeit mechanical rather than electrical ones (they probably are!).

Thinking about what defines a synthesizer can be somewhat academic, but it highlights a fundamental question about sound synthesis: is the aim to emulate the sound of real-world acoustic instruments, or is it to generate purely synthetic sounds that no acoustic instrument could create? The answer is, of course, that sound synthesis can mean both of these things. Nevertheless, it is the desire to create realistic emulations of acoustic instruments that has driven the most significant developments in the field, and for a long time the ability to create unique synthetic sounds was really just a happy side-effect of the technology.

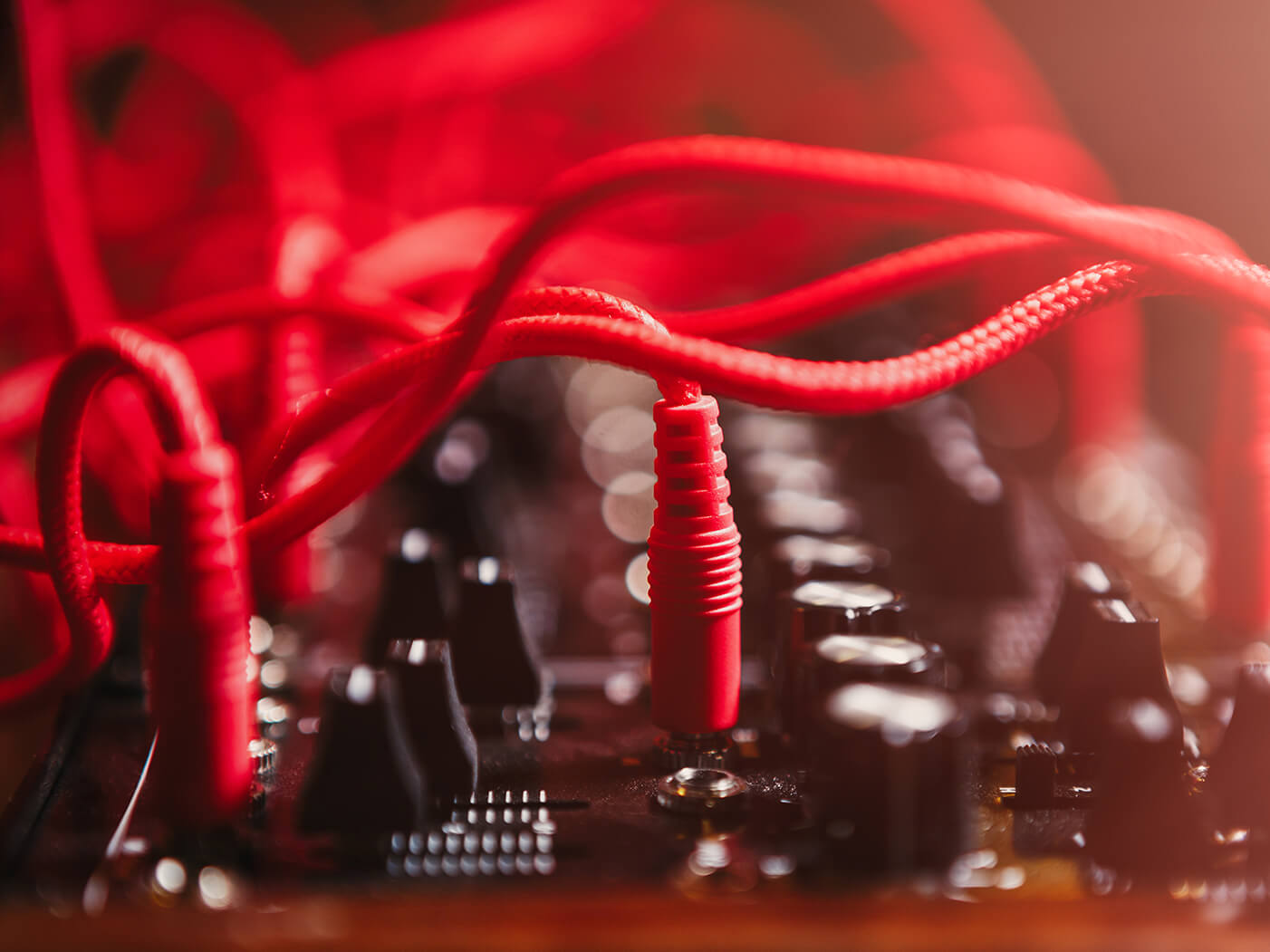

Serious research and development of sound-synthesis techniques really got going in the 1950s, when semiconductor technology started to replace the bulky and expensive vacuum-tube-based circuitry of the first half of the century. The compact, low-cost, low-energy nature of these new components made possible the creation of new types of circuit, and audio researchers understood that these circuits could be combined to create a practical and controllable method of sound synthesis: subtractive synthesis.

Early synthesizers were monophonic; that is, they had only a single voice and so could play only one note at a time. When polyphonic synths first arrived, they were often extremely expensive. This was simply because creating, for example, a four-voice polyphonic synth required roughly four times the circuitry of a monophonic instrument.

Of course, the biggest benefit of polyphonic synths was and is the ability to play chords, but they also have another benefit over monophonic instruments, namely unison mode. This stacks the polyphonic voices into a monophonic mode, so that all of them play the same note simultaneously, with a slight detuning applied to each voice. The instrument feels like a mono synth to play, but gives a much thicker, fuller, richer sound than a true monophonic synth can deliver.
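To make the idea of unison detuning concrete, here is a minimal sketch of how the stacked voices might be tuned. The function name `unison_frequencies`, the even spread of voices and the 20-cent default are all assumptions made for illustration; real instruments choose their own detune curves.

```python
def unison_frequencies(base_hz, voices=4, spread_cents=20.0):
    """Return one frequency per stacked voice, detuned symmetrically around base_hz.

    spread_cents is the total distance (in cents) between the flattest
    and the sharpest voice. This even spacing is an illustrative choice.
    """
    if voices < 1:
        raise ValueError("voices must be at least 1")
    if voices == 1:
        return [base_hz]
    freqs = []
    for i in range(voices):
        # Position runs from -1.0 (flattest voice) to +1.0 (sharpest voice)
        position = 2.0 * i / (voices - 1) - 1.0
        cents = position * spread_cents / 2.0
        # 1200 cents make one octave, i.e. a doubling of frequency
        freqs.append(base_hz * 2.0 ** (cents / 1200.0))
    return freqs


if __name__ == "__main__":
    for hz in unison_frequencies(440.0, voices=4, spread_cents=20.0):
        print(f"{hz:.2f} Hz")
```

Because the voices sit only a few cents apart, they drift in and out of phase with one another, which is where the thicker sound comes from.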

Control voltage and gate

In these MIDI- and USB-infused days, where controllers, synths and sequencers all happily interconnect with one another, it’s easy to forget that early synths had no such digital control systems. Instead, playing a key would create an electrical signal called a control voltage or CV, and the voltage of this signal would determine the frequency that the oscillator(s) would produce. Similarly, a voltage would be used to open and close the synth’s gate, the system by which it registered note on and off information.
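The relationship between control voltage and pitch can be sketched in a few lines. The example below assumes the volt-per-octave scaling used by many (but not all) analogue synths, takes 0 V to mean middle C, and uses a 2.5 V gate threshold; all three values, along with the function names, are illustrative assumptions rather than a description of any particular instrument.

```python
def cv_to_frequency(cv_volts, base_hz=261.63, volts_per_octave=1.0):
    """Convert a control voltage to an oscillator frequency.

    Assumes exponential (volt-per-octave) scaling: each additional
    volts_per_octave doubles the frequency. base_hz is the pitch at 0 V
    (middle C here, chosen purely for illustration).
    """
    return base_hz * 2.0 ** (cv_volts / volts_per_octave)


def gate_is_open(gate_volts, threshold_volts=2.5):
    """Treat the note as 'on' while the gate voltage is at or above the threshold.

    The threshold is a hypothetical value picked for this sketch.
    """
    return gate_volts >= threshold_volts


if __name__ == "__main__":
    # One semitone is 1/12 of an octave, so 1/12 V under this scaling
    for semitones in (0, 7, 12):
        cv = semitones / 12.0
        print(f"{cv:.3f} V -> {cv_to_frequency(cv):.2f} Hz")
    print("gate open at 5 V:", gate_is_open(5.0))
```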

The CV/Gate system was riddled with problems. Firstly, different manufacturers had their own implementations, so interconnecting devices was rarely straightforward and sometimes impossible. But more importantly, the nature of the analogue circuitry meant that a synth’s response to CV signals could be affected by environmental conditions (heat, humidity and so on), resulting in unstable tuning and unpredictable responses of filters and envelopes. No wonder MIDI was such a hit!
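To get a feel for how little drift it takes to upset the tuning, the sketch below converts a small voltage error into a pitch error in cents. It assumes a volt-per-octave oscillator, which is only one of the schemes that were in use, and the function name `drift_in_cents` is hypothetical.

```python
def drift_in_cents(offset_volts, volts_per_octave=1.0):
    """Return the pitch error, in cents, caused by a control-voltage offset.

    Assumes volt-per-octave scaling, where one octave (1200 cents)
    corresponds to volts_per_octave volts.
    """
    return 1200.0 * offset_volts / volts_per_octave


if __name__ == "__main__":
    for millivolts in (1, 5, 10):
        cents = drift_in_cents(millivolts / 1000.0)
        print(f"{millivolts} mV offset -> {cents:.1f} cents out of tune")
```

Under this assumption, an error of just 10 mV shifts the pitch by about 12 cents, which is enough to be audible when playing alongside another instrument.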
