Harmonics, Timbre and Recording Tutorial

Harmonics are the key building blocks of sound, and are essential to rationalising the art of recording. Mark Cousins puts sound under the microscope.

The DNA Of Sound

Like a number of bass-enhancement tools, Waves’ MaxxBass achieves its bass lift by working with the second and third harmonics.

Looked at on a keyboard, this rise through the harmonic series creates overtones that become increasingly bunched together. The second harmonic, for example, is always an octave above the fundamental, the widest interval between any two adjacent harmonics. The third harmonic, however, is only seven semitones (a perfect fifth) above the second, which makes it a D when the fundamental is a G, while the fourth is just five semitones higher still (a G, two octaves above the fundamental). From a musical perspective, it’s interesting to note how these initial harmonics (particularly the second, third and fifth) spell out the notes of a major chord, which helps explain the particular ‘mathematical sonority’ of a major voicing.
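To make the keyboard picture concrete, here is a small Python sketch that lists the first eight harmonics of a fundamental, the nearest equal-tempered note for each, and the interval in semitones from the previous harmonic, 12·log2(n/(n−1)). It assumes a fundamental of G2 (about 98Hz) and standard A4 = 440Hz tuning; the function names are illustrative, not taken from any library.

```python
import math

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


def midi_to_freq(midi: int) -> float:
    """Equal-tempered frequency of a MIDI note (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2 ** ((midi - 69) / 12)


def nearest_note(freq: float) -> tuple[str, float]:
    """Nearest equal-tempered note name and the deviation from it in cents."""
    midi_exact = 69 + 12 * math.log2(freq / 440.0)
    midi = round(midi_exact)
    name = f"{NOTE_NAMES[midi % 12]}{midi // 12 - 1}"
    return name, (midi_exact - midi) * 100


def harmonic_series(f0: float, count: int = 8) -> list[tuple[int, float, str, float, float]]:
    """(harmonic number, frequency, nearest note, cents off, semitones above previous)."""
    rows = []
    for n in range(1, count + 1):
        freq = n * f0
        name, cents = nearest_note(freq)
        # Interval from harmonic n-1 to harmonic n; the ratio n/(n-1) shrinks as n grows.
        step = 12 * math.log2(n / (n - 1)) if n > 1 else 0.0
        rows.append((n, freq, name, cents, step))
    return rows


if __name__ == "__main__":
    g2 = midi_to_freq(43)  # assumed fundamental: G2, roughly 98 Hz
    for n, freq, name, cents, step in harmonic_series(g2):
        print(f"H{n}: {freq:7.1f} Hz  {name:<3} {cents:+6.1f} cents  +{step:5.2f} semitones")
```

Running it shows the steps shrinking (12, then about 7, about 5, about 3.9 semitones and so on), with the third, fifth and sixth harmonics landing on D, B and D, the notes of a G major triad. The fifth and seventh harmonics sit noticeably flat of their equal-tempered neighbours, which is a property of the series itself rather than of the code.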

As you move up the harmonic series, the overtones become dense clusters of notes. Thankfully, though, each step above the fundamental is generally accompanied by a fall in amplitude: in short, the harmonics get quieter the further up the series you go. What’s interesting is that the rate of this attenuation, together with the balance between odd and even harmonics, is what largely determines the principal timbre (tone colour) of an instrument. A clarinet, for example, has strong odd-ordered harmonics and relatively little energy in its higher harmonics, yielding a slightly hollow sound, but one with a pleasing purity. A trumpet, on the other hand, has a rich collection of both odd and even harmonics, making it sound brighter and raspier than the clarinet.
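One way to explore how harmonic balance shapes timbre is additive synthesis: summing sine waves at whole-number multiples of a fundamental. The Python sketch below builds two deliberately crude spectra, an odd-harmonics-only spectrum with a steep roll-off (a rough stand-in for a hollow, clarinet-like tone) and a full series falling off as 1/n (a sawtooth-like, brighter tone), and compares them using the spectral centroid, a common rough measure of brightness. These spectra are illustrative assumptions, not measurements of real instruments.

```python
import math


def harmonic_amplitudes(count: int, odd_only: bool, rolloff: float) -> list[float]:
    """Amplitudes for harmonics 1..count, falling as 1 / n**rolloff.
    With odd_only=True, every even harmonic is set to zero."""
    return [0.0 if (odd_only and n % 2 == 0) else 1.0 / n ** rolloff
            for n in range(1, count + 1)]


def synthesize(f0: float, amps: list[float], sample_rate: int, n_samples: int) -> list[float]:
    """Additive synthesis: sum of sines at n * f0 weighted by amps[n - 1]."""
    return [
        sum(a * math.sin(2 * math.pi * n * f0 * i / sample_rate)
            for n, a in enumerate(amps, start=1))
        for i in range(n_samples)
    ]


def spectral_centroid(amps: list[float]) -> float:
    """Amplitude-weighted mean harmonic number: higher means brighter."""
    return sum(n * a for n, a in enumerate(amps, start=1)) / sum(amps)


if __name__ == "__main__":
    hollow = harmonic_amplitudes(20, odd_only=True, rolloff=2.0)   # assumed: odd only, steep roll-off
    bright = harmonic_amplitudes(20, odd_only=False, rolloff=1.0)  # assumed: all harmonics, 1/n roll-off
    print(f"hollow centroid: harmonic {spectral_centroid(hollow):.2f}")
    print(f"bright centroid: harmonic {spectral_centroid(bright):.2f}")
    wave = synthesize(220.0, bright, sample_rate=48_000, n_samples=480)  # 10 ms of audio
    print(f"peak sample of the bright tone: {max(wave):.3f}")
```

The centroid of the full 1/n series sits several harmonics higher than that of the odd-only, steeply rolled-off series, which matches the ‘brighter’ versus ‘hollow’ distinction described above.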

Sounds are considered ‘non-musical’ when they contain overtones that aren’t musically related to the fundamental frequency, although this doesn’t mean they aren’t useful in music per se! In practice, non-musicality is a sliding scale. Bell sounds, for example, have a degree of inharmonicity, because some of their overtones are not whole-number multiples of the fundamental frequency. Drums, on the other hand, combine a pitched element with a collection of random, non-harmonic frequency components known as noise. Noise has no definite pitch, of course, but it is still musically useful as a means of defining rhythm.
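Inharmonicity can be quantified by measuring how far each overtone sits from the nearest whole-number multiple of the fundamental, in cents. The Python sketch below does this for an ideal harmonic series and for the first few modes of an ideal circular membrane, a textbook idealisation of a drumhead whose mode frequencies are proportional to zeros of Bessel functions (hard-coded below). It illustrates the idea only and is not a model of any real drum or bell.

```python
import math

# First few Bessel-function zeros j(m, k) for an ideal circular membrane, in ascending order:
# j(0,1), j(1,1), j(2,1), j(0,2), j(3,1), j(1,2). Each mode's frequency is proportional to its zero.
MEMBRANE_ZEROS = [2.404826, 3.831706, 5.135622, 5.520078, 6.380162, 7.015587]


def deviation_cents(ratios: list[float]) -> list[float]:
    """Cents between each partial (as a ratio to the fundamental) and the nearest harmonic."""
    result = []
    for r in ratios:
        nearest = max(1, round(r))  # nearest whole-number multiple of the fundamental
        result.append(1200 * math.log2(r / nearest))
    return result


if __name__ == "__main__":
    harmonic = [float(n) for n in range(1, 7)]
    membrane = [z / MEMBRANE_ZEROS[0] for z in MEMBRANE_ZEROS]
    for label, ratios in (("harmonic", harmonic), ("membrane", membrane)):
        offsets = ", ".join(f"{c:+.0f}" for c in deviation_cents(ratios))
        print(f"{label:>8}: ratios {[round(r, 2) for r in ratios]} -> cents {offsets}")
```

The harmonic series scores zero throughout, while the idealised membrane’s second mode alone sits several hundred cents away from any harmonic, which is why such sounds read as less clearly pitched.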

All Things Equal

Having established the rudimentary principles of harmonics, let’s look at how harmonics directly affect the process of recording. Arguably the most important tool for ‘harmonic modification’ is the humble equalizer: a signal processor that lets us modify the relative balance of frequencies (and, therefore, the harmonic balance) within a given audio signal.
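To see how a single EQ boost reshapes harmonic balance, the sketch below evaluates the magnitude response of a peaking (bell) filter at each harmonic of a note. It uses the widely published peaking-EQ biquad from Robert Bristow-Johnson’s ‘Audio EQ Cookbook’; the note (A2, 110Hz), the boost (+6dB at 220Hz with a Q of 1) and the 48kHz sample rate are arbitrary choices for illustration.

```python
import cmath
import math


def peaking_eq_coeffs(fc: float, gain_db: float, q: float, fs: float):
    """Peaking EQ biquad coefficients (b, a) following the Audio EQ Cookbook formulas."""
    a_lin = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * fc / fs
    alpha = math.sin(w0) / (2 * q)
    b = (1 + alpha * a_lin, -2 * math.cos(w0), 1 - alpha * a_lin)
    a = (1 + alpha / a_lin, -2 * math.cos(w0), 1 - alpha / a_lin)
    return b, a


def gain_db_at(freq: float, b, a, fs: float) -> float:
    """Magnitude response in dB of H(z) = B(z)/A(z) evaluated at z = e^(jw)."""
    z_inv = cmath.exp(-1j * 2 * math.pi * freq / fs)
    num = b[0] + b[1] * z_inv + b[2] * z_inv ** 2
    den = a[0] + a[1] * z_inv + a[2] * z_inv ** 2
    return 20 * math.log10(abs(num / den))


if __name__ == "__main__":
    fs = 48_000.0
    b, a = peaking_eq_coeffs(fc=220.0, gain_db=6.0, q=1.0, fs=fs)  # assumed EQ settings
    f0 = 110.0  # assumed note: A2
    for n in range(1, 7):
        print(f"harmonic {n} ({n * f0:.0f} Hz): {gain_db_at(n * f0, b, a, fs):+.2f} dB")
```

The second harmonic receives the full boost while the fundamental and upper harmonics get progressively less, so the relative balance between them changes even though a linear EQ creates no new frequencies.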

When it comes to using EQ, it’s interesting to note how we distinguish between boosts applied to the fundamental frequencies of a given instrument, often found in the low to middle portion of the audio spectrum, and boosts applied to its ‘colour’, higher up the spectrum. Even an instrument as high as a flute, for example, produces its highest fundamental at a little over 2kHz (a very high D), so any boost above 2kHz or so is largely to do with the overtones of an instrument. Likewise, when we boost a bass part around 100Hz, we’re mostly lifting its fundamental frequencies rather than modifying its overtones.
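A quick way to check whether an EQ band is working on fundamentals or overtones is to list which harmonics of a note fall inside it. The helper below is a hypothetical illustration, not part of any EQ or library; the notes (flute A4, bass A2 and bass E1) and the band edges are assumptions.

```python
def midi_to_freq(midi: int) -> float:
    """Equal-tempered frequency of a MIDI note (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2 ** ((midi - 69) / 12)


def harmonics_in_band(f0: float, low_hz: float, high_hz: float) -> list[int]:
    """Harmonic numbers n for which n * f0 lies within [low_hz, high_hz]."""
    result = []
    n = 1
    while n * f0 <= high_hz:
        if n * f0 >= low_hz:
            result.append(n)
        n += 1
    return result


if __name__ == "__main__":
    flute_a4 = midi_to_freq(69)  # 440 Hz
    bass_a2 = midi_to_freq(45)   # 110 Hz
    bass_e1 = midi_to_freq(28)   # about 41.2 Hz, a bass guitar's open low E
    print("flute A4, 2-10kHz band:", harmonics_in_band(flute_a4, 2_000, 10_000))
    print("bass A2, 80-125Hz band:", harmonics_in_band(bass_a2, 80, 125))
    print("bass E1, 80-125Hz band:", harmonics_in_band(bass_e1, 80, 125))
```

For the flute, the high band touches only the 5th harmonic and above, while for the bass A2 the low band lands on the fundamental. On the very lowest notes, such as the open E, the same low boost falls on the 2nd and 3rd harmonics instead.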

One of the most interesting quirks of harmonics, though, is our hearing’s ability to ‘fill in the gaps’ at the lower end of the spectrum and perceive fundamental frequencies even when a speaker can’t reproduce them. This is perfectly illustrated by laptop speakers, which have extremely limited bass response, often with a sharp roll-off around 200–250Hz. Even with this limited bandwidth, we can still perceive the bass through the presence of its second and third harmonics. Extending this concept to its natural conclusion, a large number of ‘bass-enhancement’ tools, like Waves’ MaxxBass, generate second and third harmonics of the bass as a means of increasing our perception of bass without adding low-frequency energy.
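The ‘missing fundamental’ effect can be illustrated numerically. The Python sketch below builds a tone from only the 2nd, 3rd and 4th harmonics of 100Hz, so there is no energy at 100Hz itself, and then uses a simple autocorrelation search, a standard pitch-estimation technique, to find the waveform’s repetition period. The sample rate, lag range and frequencies are assumptions for the demo, and autocorrelation is only a crude analogue of what our hearing does.

```python
import math

SAMPLE_RATE = 8_000  # Hz, assumed for the demo


def tone(freqs: list[float], n_samples: int) -> list[float]:
    """Sum of equal-amplitude sines at the given frequencies."""
    return [sum(math.sin(2 * math.pi * f * i / SAMPLE_RATE) for f in freqs)
            for i in range(n_samples)]


def autocorrelation_period(signal: list[float], min_lag: int, max_lag: int) -> int:
    """Lag (in samples) in [min_lag, max_lag] where the signal best matches a shifted copy of itself."""
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        score = sum(signal[i] * signal[i + lag] for i in range(len(signal) - lag))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


if __name__ == "__main__":
    partials = [200.0, 300.0, 400.0]  # harmonics 2-4 of 100 Hz, with no 100 Hz component
    signal = tone(partials, n_samples=1_680)  # 21 periods of 100 Hz
    lag = autocorrelation_period(signal, min_lag=10, max_lag=120)
    print(f"period: {lag} samples -> pitch estimate {SAMPLE_RATE / lag:.1f} Hz")
```

Although the signal contains nothing below 200Hz, its waveform still repeats every 10ms, the period of the absent 100Hz fundamental.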

Here are the first eight harmonics of the harmonic series in notation form. Notice how the distance between successive harmonics shrinks the further you move up the series.

Soft Saturation

Probably the most interesting application of harmonics in recording practice is the role and musical contribution of distortion, the product of non-linearity. Whenever a waveform is distorted, whether by soft valve saturation or by an A/D converter slicing off the top of a waveform, the harmonic structure of a sound is changed. Broadly speaking, the more distortion you add, the more harmonic information you add, but no two devices distort in the same way.
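The link between non-linearity and added harmonics is easy to demonstrate by passing a pure sine wave through a waveshaping function and measuring total harmonic distortion (THD), the combined level of the harmonics relative to the fundamental. The Python sketch below compares a tanh curve (a common, simplified stand-in for soft saturation) with a hard clipper (a caricature of a converter running out of headroom) at several drive levels. Neither curve models a specific real device.

```python
import math

N = 2048  # samples spanning exactly one cycle, so harmonic n lands exactly on DFT bin n


def harmonic_amplitude(signal: list[float], n: int) -> float:
    """Amplitude of harmonic n, computed as a single DFT bin."""
    size = len(signal)
    re = sum(s * math.cos(2 * math.pi * n * i / size) for i, s in enumerate(signal))
    im = sum(s * math.sin(2 * math.pi * n * i / size) for i, s in enumerate(signal))
    return 2 * math.hypot(re, im) / size


def thd(signal: list[float], max_harmonic: int = 10) -> float:
    """Root-sum-square of harmonics 2..max_harmonic divided by the fundamental's amplitude."""
    fundamental = harmonic_amplitude(signal, 1)
    rest = sum(harmonic_amplitude(signal, n) ** 2 for n in range(2, max_harmonic + 1))
    return math.sqrt(rest) / fundamental


def soft_clip(x: float, drive: float) -> float:
    """Assumed soft-saturation curve: tanh."""
    return math.tanh(drive * x)


def hard_clip(x: float, drive: float) -> float:
    """Assumed hard clipper: output limited to [-1, 1]."""
    return max(-1.0, min(1.0, drive * x))


if __name__ == "__main__":
    sine = [math.sin(2 * math.pi * i / N) for i in range(N)]
    for drive in (0.5, 2.0, 8.0):
        soft = thd([soft_clip(s, drive) for s in sine])
        hard = thd([hard_clip(s, drive) for s in sine])
        print(f"drive {drive:>4}: soft THD {soft:6.2%}   hard THD {hard:6.2%}")
```

Raising the drive raises the THD, and at the same drive the two curves produce different amounts of harmonics: at a drive of 0.5 the hard clipper hasn’t started clipping and adds essentially nothing, while the tanh curve is already bending the waveform.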

What’s particularly interesting about distortion, therefore, is how the varying non-linearities of different studio devices affect the additional harmonics that are created. Not surprisingly, the most desirable form of distortion is so-called harmonic distortion (quoted on spec sheets as THD, or total harmonic distortion), whereby the added harmonics are musically related to the input. Beyond this, there are also differences between valve devices, which tend to favour even-ordered harmonics, and solid-state distortion and tape saturation, which veer towards odd-ordered harmonics. Inharmonic distortion, such as intermodulation distortion, is much less desirable, as it adds material that isn’t musically related to the input, which also explains why digital distortion (which is often prone to inharmonic artefacts) can sound so unpleasant.
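Intermodulation products are easy to enumerate: when two tones at f1 and f2 pass through a non-linearity, new components appear at sums and differences such as f1 + f2, f2 - f1 and 2f1 - f2. The Python sketch below lists the second- and third-order products and checks whether each lies close to a harmonic of a reference pitch. The tone pairs (A4 with an equal-tempered C#5, and A4 with a just-tuned E5 at exactly 660Hz), the 10-cent tolerance and the function names are assumptions for illustration.

```python
import math


def intermod_products(f1: float, f2: float, max_order: int = 3) -> list[float]:
    """Frequencies m*f1 + n*f2 and |m*f1 - n*f2| for m, n >= 1 with m + n <= max_order."""
    products = set()
    for m in range(1, max_order):
        for n in range(1, max_order - m + 1):
            for f in (m * f1 + n * f2, abs(m * f1 - n * f2)):
                if f > 0:
                    products.add(round(f, 2))
    return sorted(products)


def is_harmonic_of(freq: float, f0: float, tolerance_cents: float = 10.0) -> bool:
    """True if freq is within tolerance_cents of a whole-number multiple of f0."""
    n = max(1, round(freq / f0))
    return abs(1200 * math.log2(freq / (n * f0))) <= tolerance_cents


if __name__ == "__main__":
    cases = (
        ("A4 + C#5 (tempered)", 440.0, 554.37, (440.0, 554.37)),  # checked against both input tones
        ("A4 + E5 (3:2)", 440.0, 660.0, (220.0,)),                # checked against their common fundamental
    )
    for label, f1, f2, references in cases:
        products = intermod_products(f1, f2)
        related = [f for f in products if any(is_harmonic_of(f, r) for r in references)]
        print(f"{label}: products {products}, harmonically related: {related}")
```

For the just fifth, every product lands on a harmonic of 220Hz, the pair’s implied common fundamental, so the result stays ‘musical’; for the tempered major third, the products fall between the harmonics of either note.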

Although technically undesirable, harmonic distortion can contribute positively to the enjoyment and musicality of a recording. Extra harmonic information can add body to a sound, which is one reason engineers often prefer recording through ‘colourful’ preamps with valves or transformers. On bass guitar, the second and third harmonics added by a small amount of soft saturation can make a big difference to how well the instrument carries over small speakers, although too much colour can make the bass clash with other instruments in the mix.
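The odd/even distinction, and the 2nd and 3rd harmonics a little saturation adds to a bass, can both be sketched with a tanh waveshaper. A symmetric curve (one that treats the positive and negative halves of the wave identically) can only add odd harmonics; shifting the operating point with a bias makes the curve asymmetric, and even harmonics appear. This is a simplified model with an arbitrary drive and bias, chosen for illustration, and not a simulation of any particular valve, transistor or tape circuit.

```python
import math

N = 4096  # samples spanning exactly one cycle of the test sine


def saturate(x: float, drive: float, bias: float) -> float:
    """Assumed tanh waveshaper; a non-zero bias makes the transfer curve asymmetric.
    Subtracting tanh(bias) keeps silence at zero output."""
    return math.tanh(drive * x + bias) - math.tanh(bias)


def harmonic_level(signal: list[float], n: int) -> float:
    """Amplitude of harmonic n, computed as a single DFT bin."""
    size = len(signal)
    re = sum(s * math.cos(2 * math.pi * n * i / size) for i, s in enumerate(signal))
    im = sum(s * math.sin(2 * math.pi * n * i / size) for i, s in enumerate(signal))
    return 2 * math.hypot(re, im) / size


def harmonic_profile(bias: float, drive: float = 1.5) -> dict[int, float]:
    """Level of the 2nd and 3rd harmonics relative to the fundamental, in dB."""
    sine = [math.sin(2 * math.pi * i / N) for i in range(N)]
    out = [saturate(s, drive, bias) for s in sine]
    h1 = harmonic_level(out, 1)
    # Floor at -240 dB so a (numerically) absent harmonic doesn't hit log10(0).
    return {n: 20 * math.log10(max(harmonic_level(out, n) / h1, 1e-12)) for n in (2, 3)}


if __name__ == "__main__":
    for bias in (0.0, 0.3):
        levels = harmonic_profile(bias)
        print(f"bias {bias}: 2nd {levels[2]:7.1f} dB, 3rd {levels[3]:7.1f} dB")
```

With no bias the second harmonic is effectively absent and only the third shows up; adding a small bias brings in a strong second harmonic alongside the third.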

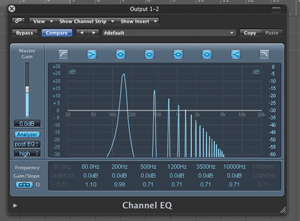

A Fast Fourier Transform (FFT) analysis display on an equalizer lets you identify the harmonic profile of your signal, including the fundamental frequency and its associated harmonics.
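Under the hood, an analyser display like this is built on the discrete Fourier transform. The Python sketch below uses a naive DFT (fine for a short demo; real analysers use an FFT, for example numpy.fft.rfft, plus a window function) on a synthetic tone whose harmonics fall as 1/n, picks the spectral peaks and takes the lowest strong peak as the fundamental. The 8kHz sample rate, 800-sample frame (10Hz bin spacing), 200Hz test tone and -40dB threshold are assumptions chosen so that every harmonic lands exactly on a bin.

```python
import math

SAMPLE_RATE = 8_000  # assumed sample rate
FRAME = 800          # bin spacing = SAMPLE_RATE / FRAME = 10 Hz


def magnitude_spectrum(signal: list[float]) -> list[float]:
    """Naive DFT magnitudes for bins 0..len/2 (O(n^2); an FFT does the same job faster)."""
    size = len(signal)
    mags = []
    for k in range(size // 2 + 1):
        re = sum(s * math.cos(2 * math.pi * k * i / size) for i, s in enumerate(signal))
        im = sum(s * math.sin(2 * math.pi * k * i / size) for i, s in enumerate(signal))
        mags.append(math.hypot(re, im))
    return mags


def find_peaks(mags: list[float], threshold_db: float = -40.0) -> list[float]:
    """Frequencies of local maxima within threshold_db of the strongest bin."""
    floor = max(mags) * 10 ** (threshold_db / 20)
    return [
        k * SAMPLE_RATE / FRAME
        for k in range(1, len(mags) - 1)
        if mags[k] > floor and mags[k] > mags[k - 1] and mags[k] >= mags[k + 1]
    ]


if __name__ == "__main__":
    f0 = 200.0  # assumed test tone: harmonics 1-5 with 1/n amplitudes
    tone = [sum(math.sin(2 * math.pi * n * f0 * i / SAMPLE_RATE) / n for n in range(1, 6))
            for i in range(FRAME)]
    peaks = find_peaks(magnitude_spectrum(tone))
    fundamental = min(peaks)
    print("peaks (Hz):", peaks)
    print("fundamental:", fundamental, "Hz; harmonic numbers:", [round(p / fundamental) for p in peaks])
```

Taking the lowest peak as the fundamental is a simplification: with a missing or weak fundamental, or a noisy signal, a real analyser or pitch detector needs a more robust rule.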

Perfect Harmony

More than being just the science behind sound, harmonics are a way of rationalising the processes and techniques behind recording: why we might choose to boost at a given frequency, for example, or why we might choose one preamp over another. Almost every decision we make as sound engineers has an impact on the harmonic construction of our output, so it’s essential that we consider what we’re actually doing in a harmonic sense, instead of relying solely on whether a change ‘sounds good’. Although our ears should always be the final judge, it’s always the harmonic content that they’re judging, whether on a conscious or subconscious level…
