Ten Minute Master – Sample Streaming


Sample-streaming and the associated technologies are vital facets of the modern-day software sampler. Mark Cousins makes his voice count…

Solid-state drives blur the line between traditional hard drives and RAM, offering incredibly fast access times that allow for large voice counts

What the pioneers of sampling would make of today’s sample-based virtual instruments is hard to imagine – the layers of delicate velocity switching, multiple round-robins and audiophile sound quality would have been something of a pipe dream. Certainly, we’ve made a huge jump from the crunchy 8-bit samplers of old, but the technology that has powered this change (and the advancement in virtual instruments) has been sample streaming. Like many other ‘background activities’ it’s easy to ignore sample streaming, but for those who are seeking to get the best from their music production workstations, having an understanding of the principles and practicalities of the modern-day sampler and how it works with sample data is vital.

The Hard Way

Compared with today’s ubiquitous software samplers, the original hardware samplers of yesteryear had incredibly limited technical specifications and, most notably, limited RAM. Most were fitted with around 750KB of memory, with a whopping 2MB being seen as a relative luxury. Given these pitiful memory resources – which generally yielded only around 30–60 seconds of sampling time – developers needed to think carefully about how they used the memory at their disposal. Techniques such as velocity switching, although technically possible, were rarely used, and extensive looping was a necessity for any sound that sustained or had a long decay (cymbals, for example).

Later developments in hardware sampling ushered in greater amounts of RAM, although the total rarely exceeded 32MB. While this improvement helped instruments like drums, it was still incredibly meagre when it came to creating realistic multisamples of large instruments such as piano, where just a single velocity layer (covering all 88 notes) would rapidly eat up the available sampling time.
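To put the memory figures in this section into perspective, here is a rough back-of-envelope sketch. The sample rates, bit depths and channel counts are illustrative assumptions rather than the specifications of any particular sampler, and the function name is made up for the example.

```python
def sampling_time_seconds(ram_bytes, sample_rate_hz, bit_depth, channels):
    """Seconds of uncompressed PCM audio that fit into ram_bytes."""
    bytes_per_second = sample_rate_hz * (bit_depth // 8) * channels
    return ram_bytes / bytes_per_second

KB = 1024
MB = 1024 * KB

# Assumption: an early sampler recording 8-bit mono at 16 kHz.
early = sampling_time_seconds(750 * KB, 16_000, 8, 1)

# Assumption: a later 32MB sampler holding a 16-bit, 44.1 kHz stereo piano.
later_total = sampling_time_seconds(32 * MB, 44_100, 16, 2)
per_note = later_total / 88  # one velocity layer spread over 88 keys

print(f"750KB at 8-bit/16 kHz mono: {early:.0f} s")
print(f"32MB at 16-bit/44.1 kHz stereo: {later_total:.0f} s in total")
print(f"Per piano note (88 keys, one layer): {per_note:.1f} s")
```

Under these assumptions, the early machine lands inside the 30–60 second range, while the 32MB piano gets only a couple of seconds per note, which is why looping remained a necessity.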

The Giga Revolution

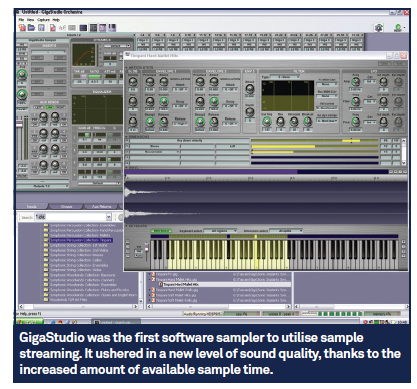

Arguably one of the most significant revolutions in sampling came about with the introduction of NemeSys’ GigaSampler, which later became known as GigaStudio. GigaSampler was the first sampler to offer sample streaming, a process that dynamically combines RAM and the computer’s internal hard drive as a means of triggering sample-based instruments. Previously, samplers made use only of RAM, which led to the aforementioned restrictions on available sample time, whereas a system drawing on both RAM and a hard drive could conceivably gain access to an almost limitless amount of sample time.

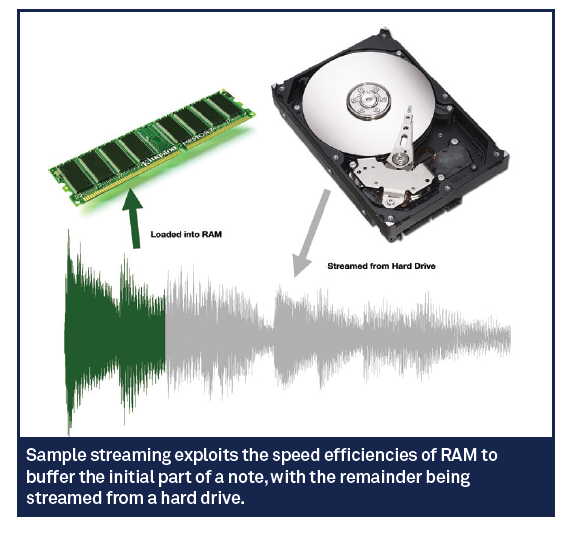

In understanding the processes and practicalities of sample streaming, it’s important to make the distinction between the use of RAM and the additional benefits brought about through the introduction of a hard drive. The principal benefit of RAM is that it’s fast – data can be accessed almost instantaneously, making it ideal for triggering samples in real time. Rather than loading the whole sample into RAM, though, sample streaming makes use of a buffer, so that only the start of each note is loaded into RAM, with the remainder being streamed from the drive as and when required.

By using RAM as a buffer, a hard drive is given the precious few milliseconds it needs to read and play back the rest of the sample data. In theory, sample streaming is a ‘best of both worlds’ solution, combining the speed of RAM with the near-infinite sample duration that a hard drive offers. Not surprisingly, then, software samplers such as GigaSampler ushered in an exponential leap in quality, removing the necessity for sample loops and offering new possibilities such as round robins, complex velocity switching and keyswitching.
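The buffering idea described above can be sketched in a few lines of Python. This is a simplified illustration and not how GigaSampler or any other product is actually implemented: the `StreamedSample` class, its parameters and the in-memory `fake_drive` standing in for a hard drive are all hypothetical.

```python
import io

class StreamedSample:
    """Keeps only the start of a sample in RAM; the rest stays on the 'drive'."""

    def __init__(self, source, preload_bytes):
        self.source = source  # file-like object standing in for the drive
        self.source.seek(0)
        # The preloaded head lives in RAM, so it can be triggered instantly.
        self.preload = self.source.read(preload_bytes)

    def play(self, chunk_bytes):
        """Yield (origin, data) chunks: RAM preload first, then the drive."""
        for start in range(0, len(self.preload), chunk_bytes):
            yield "ram", self.preload[start:start + chunk_bytes]
        # A real sampler would fetch this data in the background while the
        # preload is still playing; here the read is sequential for clarity.
        self.source.seek(len(self.preload))
        while True:
            chunk = self.source.read(chunk_bytes)
            if not chunk:
                break
            yield "disk", chunk

# Usage: a 1,024-byte 'sample' with the first 256 bytes preloaded.
original = bytes(range(256)) * 4
fake_drive = io.BytesIO(original)
sample = StreamedSample(fake_drive, preload_bytes=256)

chunks = list(sample.play(chunk_bytes=128))
origins = [origin for origin, _ in chunks]
audio = b"".join(data for _, data in chunks)

print(origins)
print(audio == original)
```

The first chunks come straight from RAM, buying time for the drive, and the joined output is identical to the original sample data.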

Random Access Memories

Having understood the principles of sample streaming, it’s worthwhile taking note of the practical implications, particularly with respect to hard drive usage. Of course, even though the hard drive supplies the majority of the data needs, access to a plentiful amount of RAM is still essential. While a suggested ‘minimum specification’ will indicate 4GB of RAM as being sufficient, it’s much better to look towards installing 8–16GB of RAM if you intend to use a lot of sample-based instruments.
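As a rough guide to why RAM still matters, the sketch below estimates how much memory the preload buffers alone might occupy. The sample count, preload length and audio format are hypothetical figures chosen for illustration, and real samplers differ in how they size their buffers.

```python
def preload_ram_bytes(num_samples, preload_seconds,
                      sample_rate_hz=44_100, bit_depth=24, channels=2):
    """RAM needed to hold the start of every sample in a loaded template."""
    bytes_per_second = sample_rate_hz * (bit_depth // 8) * channels
    return int(num_samples * preload_seconds * bytes_per_second)

# Assumption: a large orchestral template of 20,000 individual samples,
# each with a quarter of a second held in RAM.
needed = preload_ram_bytes(num_samples=20_000, preload_seconds=0.25)

print(f"{needed / 1_000_000_000:.2f} GB of RAM for preload buffers")
```

Even with only a fraction of a second preloaded per sample, a large template in this example needs well over a gigabyte before the sequencer, plug-ins and operating system take their share.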

The other big caveat is the speed of the hard drive, both in respect to the drive itself as well as aspects such as fragmentation, capacity and connection protocol. For example, most laptops ship with energy-efficient drives that have a maximum rotation speed of 5,400RPM, whereas desktop computers are usually fitted with faster drives running at 7,200RPM or above. Of course, if you’re spec’ing a laptop for music usage, it may well be wise to opt for a faster internal drive, even if it means your battery life takes a proportionately greater hit.

The other fundamental issue with drive access is the connection protocol used to ‘attach’ the drive to your computer. A fast internal SATA drive is generally preferable, as it connects directly to the motherboard, but if you opt for an external drive you should look towards one of the faster connection protocols: FireWire 800, USB 3.0 or Thunderbolt. For real speed freaks, there’s also the possibility of running two drives in a RAID 0 array, which can almost double read throughput by striping data across both drives.
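For the curious, the striping behind RAID 0 can be illustrated with a toy example. In practice the work is handled by the RAID controller or operating system rather than by the sampler, so the `stripe` function and the tiny stripe size below are purely illustrative assumptions.

```python
def stripe(data, num_drives, stripe_bytes):
    """Distribute data across drives in alternating fixed-size stripes."""
    drives = [bytearray() for _ in range(num_drives)]
    for offset in range(0, len(data), stripe_bytes):
        target = (offset // stripe_bytes) % num_drives
        drives[target] += data[offset:offset + stripe_bytes]
    return [bytes(drive) for drive in drives]

# Eight bytes of 'sample data' striped two bytes at a time over two drives.
drive_a, drive_b = stripe(b"ABCDEFGH", num_drives=2, stripe_bytes=2)

print(drive_a)
print(drive_b)
```

Each drive ends up holding half of the data, so when both are read in parallel each one has to deliver only half of the total. The trade-off is that RAID 0 offers no redundancy: if either drive fails, the whole array is lost.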

Drive Use

When it comes to the drive itself, care should also be taken in respect to its use and capacity. If the drive is being used for tasks in addition to sample streaming, there’s an immediate issue in respect to multiple sources needing to access the drive simultaneously. Rather than using a system drive for sample streaming, therefore, it’s worth keeping all your sample data on a separate drive, even if it means using an external hard drive.

Other issues that affect drive speed are the capacity of the drive and how much data is stored on it. Generally speaking, drives are at their most efficient when no more than half-full. Beyond this point, files are more likely to become fragmented, which restricts the speed at which the computer can access them. A slow drive doesn’t necessarily limit the number of sample-based instruments you can load into your computer, but it does limit the number of voices you can stream concurrently.
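The link between drive speed and voice count comes down to simple arithmetic, sketched below. The throughput figures and the `headroom` factor are hypothetical; real-world results depend heavily on seek times and fragmentation, because many voices reading at once is far from an ideal sequential read.

```python
def max_streamed_voices(drive_mb_per_s, sample_rate_hz=44_100,
                        bit_depth=24, channels=2, headroom=0.5):
    """Rough ceiling on concurrent voices for a given sustained read rate."""
    bytes_per_voice = sample_rate_hz * (bit_depth // 8) * channels
    # headroom: assumed fraction of the quoted speed that is usable when
    # the drive is jumping between many files at once.
    usable_bytes_per_s = drive_mb_per_s * 1_000_000 * headroom
    return int(usable_bytes_per_s // bytes_per_voice)

slow = max_streamed_voices(20)    # assumption: a busy, fragmented drive
fast = max_streamed_voices(100)   # assumption: a healthy dedicated drive

print(f"20 MB/s drive: about {slow} stereo voices")
print(f"100 MB/s drive: about {fast} stereo voices")
```

Each 24-bit stereo voice at 44.1 kHz needs roughly a quarter of a megabyte per second, so a drive that is struggling to deliver data runs out of voices long before you run out of instruments to load.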

Sample Streaming Today

Although the principles of sample streaming have remained largely unchanged since the introduction of GigaSampler, it’s interesting to note how changes in computing technology have forced a degree of re-evaluation. One of the biggest developments has arguably been the introduction of 64-bit processing, which offers access to amounts of physical RAM that early users of GigaSampler could only have dreamed of. To some extent, therefore, buffer sizes can be much larger and drive usage correspondingly reduced, assuming you install plenty of RAM in your machine.

Another significant shift has come in the form of solid-state drives (SSDs), which use flash memory rather than a spinning magnetic platter to store data. The main application of SSDs has been in laptops, where their lightness and resilience to physical shock make them an ideal choice, but they’re also popular with those wanting high voice counts from a software sampler. Access times are much lower than those of hard disk drives, although capacity tends to be slightly more restrictive.

Sampling The Future

Advances in data storage and retrieval have ushered in major changes in the sonic quality of virtual instruments. However, as technology evolves and RAM becomes less of a precious asset, techniques like sample streaming could become old hat. What might replace sample streaming isn’t clear, but what is for sure is that the quality and versatility of sample-based instruments have yet to plateau – and the best is yet to come!